ACES

Academy Color Encoding System

CG OCIO #colour

Official site · ACES Docs · ACES in visual effects

Quick links: #ACES2065-1 (AP0 primaries) · #ACEScg (AP1 primaries) · #Terms · #ACES process · #Values

Why use ACES

For CG or digital artists, the primary reason to use ACES is that final renders look and feel richer and more photo-real. Much of this realism comes from the extended dynamic range ACES provides, together with the film-like tone mapping applied on output. Your final images look much closer to what you would see with your own eyes, and visibly better than typical renders viewed through a plain sRGB transform. There are three important benefits that come from this:

- Highlights - Because of the increased dynamic range, your highlights and shadows retain more detail. It takes a much more extreme light or camera exposure before pure white clipping becomes visible in your renders.

- Colors - Colors desaturate as they are lit by brighter and brighter light sources, much as the human eye perceives them in real life.

- Effort - Digital artists tend to have a much easier time achieving accurate, photo-real renders with ACES than with other colorspaces. They don't have to spend as much time fiddling with light strengths to avoid clipping and can simply use more realistic values. As a result, they get better results with equal or less effort.

- Archiving - Separately from these benefits, ACES is also meant as an archival format: ACES2065-1, described below, is designed for long-term storage and for exchanging material between facilities.

ACES colourspaces

There are only a handful of ACES colorspaces, and each one is intended for specific scenarios.

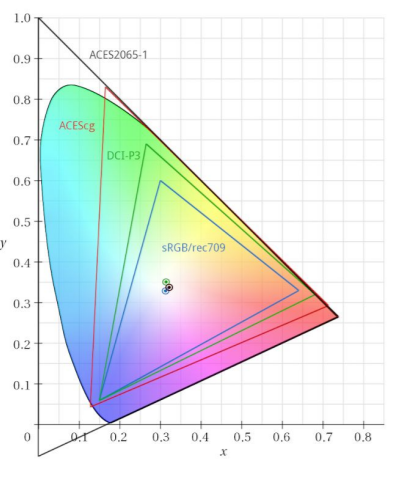

ACES2065-1 (AP0 primaries)

ACES2065-1 is often referred to by the name of its primaries, AP0 (ACES Primaries 0). It has the widest gamut of all the ACES colorspaces, fully encompassing the visible spectrum; in fact its primaries lie outside the visible spectrum. You will almost never work in this colorspace: it is meant primarily as a transfer and archival format. Typically, this is the colorspace you would use to transfer images and animations between production studios.

White point is D60 - x = 0.32168 and y = 0.33767
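The primaries and white point are all that is needed to derive a colorspace's RGB→XYZ matrix. A minimal Python sketch, assuming the published AP0 primary chromaticities from SMPTE ST 2065-1 (they are not listed on this page): convert each xy pair to XYZ, then scale the primaries so that RGB (1, 1, 1) lands exactly on the white point.

```python
"""Derive the RGB -> XYZ matrix of ACES2065-1 (AP0) from its chromaticities."""

# AP0 primaries (x, y) for R, G, B and the ACES white point.
# Assumption: values as published in SMPTE ST 2065-1.
AP0_PRIMARIES = [(0.7347, 0.2653), (0.0, 1.0), (0.0001, -0.0770)]
ACES_WHITE = (0.32168, 0.33767)


def xy_to_XYZ(x, y):
    """Chromaticity (x, y) -> XYZ with luminance Y normalised to 1."""
    return [x / y, 1.0, (1.0 - x - y) / y]


def det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def rgb_to_xyz_matrix(primaries, white):
    """Return M such that XYZ = M @ RGB, with RGB (1, 1, 1) mapping to the white."""
    # Columns of P are the unscaled XYZ values of the R, G and B primaries.
    cols = [xy_to_XYZ(x, y) for x, y in primaries]
    P = [[cols[c][r] for c in range(3)] for r in range(3)]
    W = xy_to_XYZ(*white)
    # Solve P @ S = W with Cramer's rule: one scale factor per primary.
    d = det3(P)
    S = []
    for c in range(3):
        Pc = [row[:] for row in P]
        for r in range(3):
            Pc[r][c] = W[r]
        S.append(det3(Pc) / d)
    # Scale each column of P by its factor.
    return [[P[r][c] * S[c] for c in range(3)] for r in range(3)]


M = rgb_to_xyz_matrix(AP0_PRIMARIES, ACES_WHITE)
for row in M:
    print(["%.6f" % v for v in row])
```

The middle row is the luminance (Y) weighting of AP0, roughly 0.344, 0.728 and -0.072. The negative blue weight follows directly from the blue primary having a negative y, i.e. lying outside the visible spectrum.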

ACEScg (AP1 primaries)

ACEScg is the colorspace a CG artist will be working in. It is scene-referred and linear. Its color gamut is not as wide as that of ACES2065-1, but it is far larger than most other colorspaces you might use, and as a floating-point space it has an enormous dynamic range. In some software ACEScg is also referred to as AP1, which stands for “ACES Primaries 1”.

ACEScg white point is D60 (sRGB is D65)
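Moving pixels between ACEScg and ACES2065-1 is a single 3×3 matrix, because both spaces are linear and share the same white point. A minimal Python sketch, assuming the commonly published AP1↔AP0 matrices (rounded to 10 decimals; double-check them against the ACES reference implementation before relying on them):

```python
"""Convert scene-linear ACEScg (AP1) values to ACES2065-1 (AP0) and back."""

# Assumption: commonly published AP1 -> AP0 matrix (both use the ACES white).
AP1_TO_AP0 = [
    [0.6954522414, 0.1406786965, 0.1638690622],
    [0.0447945634, 0.8596711185, 0.0955343182],
    [-0.0055258826, 0.0040252103, 1.0015006723],
]

# Its inverse, AP0 -> AP1.
AP0_TO_AP1 = [
    [1.4514393161, -0.2365107469, -0.2149285693],
    [-0.0765537734, 1.1762296998, -0.0996759264],
    [0.0083161484, -0.0060324498, 0.9977163014],
]


def apply_matrix(m, rgb):
    """Multiply a 3x3 matrix by a linear RGB triple."""
    return [sum(m[r][c] * rgb[c] for c in range(3)) for r in range(3)]


acescg_red = [1.0, 0.0, 0.0]
aces2065 = apply_matrix(AP1_TO_AP0, acescg_red)
print(aces2065)
print(apply_matrix(AP0_TO_AP1, aces2065))  # back to (approximately) ACEScg red
```

Because the white points match, neutral values such as (1, 1, 1) come through unchanged; only saturated colors move.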

ACEScc & ACEScct (AP1 primaries)

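ACEScc and ACEScct are primarily used for color grading. Unlike ACEScg, they are logarithmic rather than linear encodings: the huge range of scene-linear values is compressed so that grading controls respond evenly across exposure. Values do not sit in a simple 0-1 range; scene-linear mid-grey (0.18) encodes to about 0.41, and ACEScc spans roughly -0.36 to 1.47. ACEScct is identical to ACEScc except in the darkest values, where the pure log curve is replaced by a straight-line "toe". This makes grading/editing software behave and feel like it does with traditional log footage, which keeps many colorists very happy.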
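The ACEScc and ACEScct curves are simple enough to write out. A Python sketch of the scene-linear to ACEScc/ACEScct direction, assuming the constants from the Academy's ACEScc and ACEScct specifications; the input is assumed to already be linear AP1 (ACEScg):

```python
"""Scene-linear AP1 -> ACEScc / ACEScct encodings."""
import math


def lin_to_acescc(lin):
    """Log2 encoding; tiny and non-positive values get special handling."""
    if lin <= 0.0:
        return (math.log2(2.0 ** -16) + 9.72) / 17.52
    if lin < 2.0 ** -15:
        # Blend toward 2^-16 so the log never sees zero.
        return (math.log2(2.0 ** -16 + lin * 0.5) + 9.72) / 17.52
    return (math.log2(lin) + 9.72) / 17.52


# ACEScct: below X_BRK the log curve is replaced by the straight line A * lin + B.
X_BRK = 0.0078125
A = 10.5402377416545
B = 0.0729055341958355


def lin_to_acescct(lin):
    if lin <= X_BRK:
        return A * lin + B
    return (math.log2(lin) + 9.72) / 17.52


for value in (0.0, 0.001, 0.18, 1.0, 16.0):
    print(value, round(lin_to_acescc(value), 4), round(lin_to_acescct(value), 4))
```

Above the break point both curves agree exactly (0.18 grey encodes to about 0.414 in either); they only differ in the shadows, where ACEScct's toe takes over.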

ACESproxy (AP1 primaries)

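ACESproxy is used mainly on set, to send camera images to monitors and live-grading devices over video links such as SDI. Like ACEScc and ACEScct it is a logarithmic encoding, but it uses integer code values (10- or 12-bit) within the video legal range instead of floating point.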
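For illustration, a sketch of the 10-bit ACESproxy curve in Python. The constants (50 code values per stop, an offset of 425, and the 64-940 legal range) are recalled from the ACESproxy specification and should be treated as assumptions; the rounding here is round-half-up, which may differ from the reference implementation at exact half steps.

```python
"""Scene-linear AP1 -> 10-bit ACESproxy code values (sketch)."""
import math

STEPS_PER_STOP = 50        # code values per stop of exposure (assumed constant)
MID_CV_OFFSET = 425        # log2(lin) + 2.5 is ~0 at 0.18 grey, so grey lands near here
CV_MIN, CV_MAX = 64, 940   # 10-bit video legal range


def lin_to_acesproxy10(lin):
    # Very dark (and non-positive) values clamp to the bottom of legal range.
    if lin <= 2.0 ** -9.72:
        return CV_MIN
    cv = math.floor((math.log2(lin) + 2.5) * STEPS_PER_STOP + MID_CV_OFFSET + 0.5)
    return max(CV_MIN, min(CV_MAX, cv))


for value in (0.0, 0.18, 1.0, 16.0):
    print(value, lin_to_acesproxy10(value))
```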

Terms

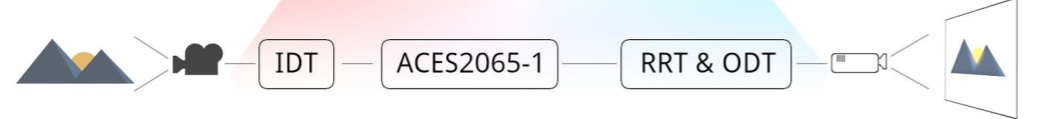

IDT

The Input Device Transform is the transform used to convert the pixel colors of images/videos from specific devices into an ACES colorspace.

RRT

The Reference Rendering Transform converts scene-referred linear data into high-dynamic-range, display-referred data. This data is then handed to an ODT, which converts it for viewing on a specific display type.

ODT

The Output Device Transform converts the data created by the RRT into data that can be viewed on specific devices or in specific color spaces, such as sRGB, P3 or Rec. 709.

ACES process

Simplified ACES process

First there is a scene (it can be anything, really) that is shot with a camera. The data from the camera is then converted to ACES using the IDT, the Input Device Transform. Basically, that's a transform, often delivered as a LUT, that takes the data from the camera's color space to the ACES storage color space (ACES2065-1). A lot of the magic happens in the IDT, and I'll explain that in a moment. So now we have the rushes in ACES, ready for archiving and distributing to the various departments.

The data can be exported to any output device using the RRT and ODT. The ODT is the Output Device Transform, another LUT that converts the data for output devices like monitors and projectors. But before this technical conversion, the RRT is applied. The Reference Rendering Transform is another LUT, but this one isn't technical per se: essentially, it applies a ‘look’ to the footage, and a carefully crafted one. I'll dig into that as well, but first let's look at the IDT.
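What matters most in this chain is the order of operations. The Python sketch below only illustrates that order; every transform in it is a hypothetical stand-in, not the real thing: the IDT is an identity, a simple x / (1 + x) curve takes the place of the Academy's RRT, and the ODT is just a clamp plus the standard sRGB encoding curve.

```python
"""Order of operations in an ACES view pipeline, using toy stand-ins.

Hypothetical placeholders, NOT the real IDT/RRT/ODT:
- idt(): identity (pretend the camera data is already scene-linear ACES)
- rrt(): x / (1 + x) tone curve standing in for the Academy's RRT
- odt(): clamp to [0, 1], then the sRGB encoding curve
"""


def idt(camera_rgb):
    return list(camera_rgb)


def rrt(aces_rgb):
    return [max(v, 0.0) / (1.0 + max(v, 0.0)) for v in aces_rgb]


def srgb_encode(v):
    return 12.92 * v if v <= 0.0031308 else 1.055 * v ** (1 / 2.4) - 0.055


def odt(display_linear_rgb):
    return [srgb_encode(min(max(v, 0.0), 1.0)) for v in display_linear_rgb]


def view(camera_rgb):
    # Camera -> ACES (IDT) -> look (RRT) -> device encoding (ODT).
    return odt(rrt(idt(camera_rgb)))


print(view([0.18, 0.18, 0.18]))
print(view([16.0, 4.0, 1.0]))
```

Swapping in a different ODT (P3, Rec. 709 and so on) only changes the last step, which is the point of keeping the device-specific conversion separate from the look.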

IDT

What makes IDTs special is that they are created by the camera manufacturers, but according to specifications designed by the Academy. It's as if the Academy set up a scene with a color card, had every manufacturer shoot it, and then told each of them to make a LUT for their camera that transforms all the patches of the card to predefined values in the ACES color space. That way the manufacturers don't have to share their secrets with the world, and we get images from different cameras in roughly the same space. Differences between cameras remain, of course, since they are built differently, but this takes the guesswork out of it. You can now start to see how ACES standardizes workflows; the IDT is the first piece of the puzzle. As a VFX artist you shouldn't have much to do with IDTs directly, but it's important to know what's going on so you can properly decide how to work with the data you're given.
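The "shoot a chart, map every patch to a known ACES value" idea can be sketched as a least-squares fit of a 3×3 matrix. This is a simplified, hypothetical illustration in Python, not the Academy's actual IDT procedure (real IDTs usually also linearize the camera's log curve first), and all patch data is synthetic: the targets are generated from a made-up matrix, which the fit should recover.

```python
"""Least-squares fit of a 3x3 camera-RGB -> ACES matrix from chart patches.

Hypothetical, simplified stand-in for the matrix part of an IDT.
All patch data below is synthetic.
"""
import itertools


def det3(m):
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def solve3(a, b):
    """Solve a @ x = b for a 3x3 system with Cramer's rule."""
    d = det3(a)
    x = []
    for c in range(3):
        ac = [row[:] for row in a]
        for r in range(3):
            ac[r][c] = b[r]
        x.append(det3(ac) / d)
    return x


def fit_matrix(camera, targets):
    """Return M minimising sum ||M @ camera_i - target_i||^2 (normal equations)."""
    # Gram matrix C^T C of the camera patches.
    gram = [[sum(p[i] * p[j] for p in camera) for j in range(3)] for i in range(3)]
    rows = []
    for k in range(3):  # fit one output channel (one row of M) at a time
        rhs = [sum(p[i] * t[k] for p, t in zip(camera, targets)) for i in range(3)]
        rows.append(solve3(gram, rhs))
    return rows


# Hypothetical "true" camera -> ACES matrix used to synthesise the targets.
TRUE_M = [[0.80, 0.15, 0.05], [0.05, 0.90, 0.05], [0.02, 0.10, 0.88]]

# 27 synthetic linear camera patches on a 3x3x3 grid (stand-in for a chart).
camera_patches = [list(p) for p in itertools.product((0.05, 0.2, 0.8), repeat=3)]
aces_targets = [[sum(TRUE_M[r][c] * p[c] for c in range(3)) for r in range(3)]
                for p in camera_patches]

idt_matrix = fit_matrix(camera_patches, aces_targets)
for row in idt_matrix:
    print([round(v, 4) for v in row])
```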

RRT

Now the RRT. As I said this is a ‘look’ that is applied before transforming to the output device. The look aims to simulate, to some extent, the effect of transferring to print film stock. What the Academy did was to interview many film professionals, DOPs, directors, etc., about what they would expect an (ungraded) image to look like and what they would want an image to look like. All of that feedback was turned into a sort of ‘generic look’ that is the RRT. Though this might sound a bit arbitrary, this is the other piece to the puzzle of standardization, because the RRT makes sure that what you see on your sRGB monitor looks about the same as what you see on the P3 projector.

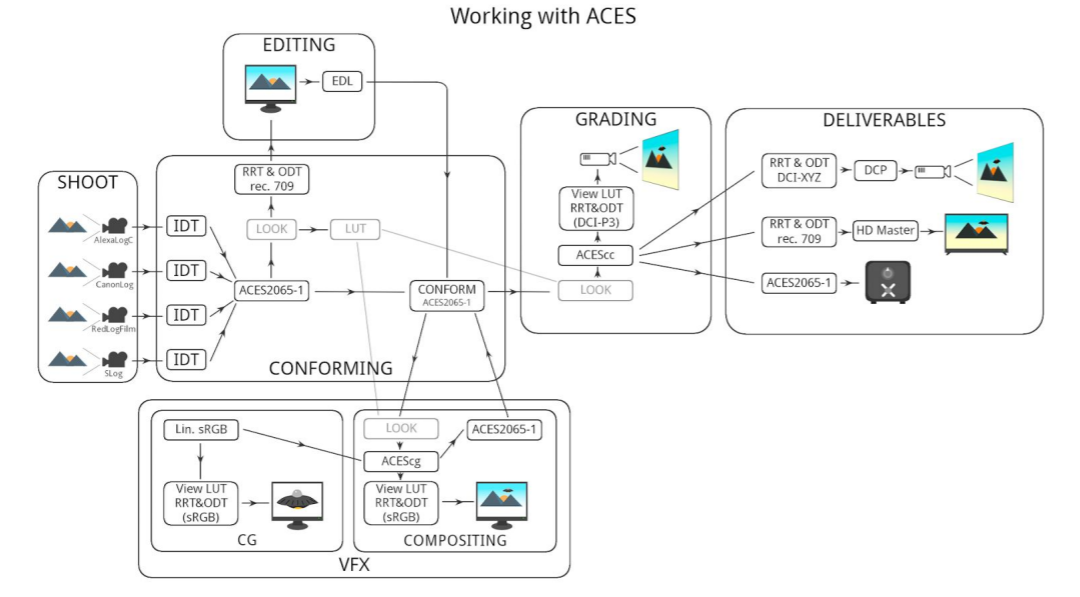

Working with ACES

AE

OpenColorIO plugin for After Effects

Project Settings - Color Settings:

Depth = 32 bits per channel (floating point)

Working Space = None

Interpret footage settings - Color Management:

Preserve RGB = on

Render out - Color Management: (ACES output)

Preserve RGB = on

- Add an adjustment layer as the topmost layer and apply the OCIO plugin. Set its input color space to the working space of your footage (ACEScg) and its output color space to your delivery format (for example AlexaLogC). This layer must sit above all compositing operations.

- To get a view LUT, add a second adjustment layer above the first one and apply the OCIO plugin again. Set its input to the output space (AlexaLogC in this example) and its output to match your display device.

- Remember to disable the view-LUT layer before your final render!

Values

An ACES value of 1.0 is not the brightest white. What displays as pure white ("1.0") through the standard output transforms corresponds to a scene-linear ACES value of roughly 16, which is the range of speculars and light sources in wide-dynamic-range scenes. In the real world no surface is that bright unless it is emitting light or showing a specular highlight: the brightest albedo in nature, fresh snow, is still well under 1.0, at around 0.9.
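Assuming the "roughly 16" figure is 0.18 × 2^6.5 ≈ 16.3 (the scene-linear value at which the ACES 1.x SDR output transforms reach peak white), a handy way to reason about these numbers is in stops above 18% grey. A small Python sketch:

```python
import math

MID_GREY = 0.18  # scene-linear value of an 18% grey card


def stops_above_grey(linear_value):
    """Exposure difference, in stops, between a scene-linear value and 0.18 grey."""
    return math.log2(linear_value / MID_GREY)


display_white = MID_GREY * 2 ** 6.5  # assumed SDR peak-white point, ~16.3
print(round(display_white, 2), stops_above_grey(display_white))
print(round(stops_above_grey(0.9), 2))  # fresh snow albedo
```

Snow at 0.9 sits only about 2.3 stops above grey, leaving roughly 4 more stops of headroom for speculars and lights before the display clips.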

Some ACES OCIO LUTs Explained

ACES - ACES proxy

LDR color space that uses ACES Primaries 1 (AP1), for on-set viewing with equipment that does not support

Output - sRGB (D60 sim.)

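RRT + sRGB ODT, but with neutrals kept at the ACES white point (about 6000K, "D60") instead of being adapted to the display's D65, to match a typical cinema environment.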
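The "D60" name corresponds to roughly 6000K. One quick check is McCamy's approximation for correlated color temperature, a swapped-in formula that is only accurate near the blackbody curve. A Python sketch:

```python
def mccamy_cct(x, y):
    """Approximate correlated color temperature in kelvin from CIE xy (McCamy)."""
    n = (x - 0.3320) / (0.1858 - y)
    return 449.0 * n ** 3 + 3525.0 * n ** 2 + 6823.3 * n + 5520.33


ACES_WHITE = (0.32168, 0.33767)  # ACES "D60" white point
D65 = (0.3127, 0.3290)           # sRGB / Rec. 709 white point

print(round(mccamy_cct(*ACES_WHITE)))
print(round(mccamy_cct(*D65)))
```

This gives about 6000K for the ACES white and about 6500K for D65.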

All the color spaces in the ‘Output’ category have the RRT applied.

ADX

Academy Density Exchange: the Academy standard for film scans. An alternative to Cineon, although the two don't match exactly.

Utility LUTs

Utility - Rec709 - Camera: rec709 color space with a gamma of ~1.95 (in Nuke: rec709)

Utility - Linear - sRGB: Standard Linear Light working space (in Nuke: Linear)

Utility - sRGB - Texture: Standard sRGB color space (in Nuke: sRGB)

Utility - RAW: No color space conversion / disable ViewLUT (in Nuke: None)
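To see how "Utility - Rec709 - Camera" and "Utility - sRGB - Texture" differ, here is a Python sketch of the two underlying curves (the BT.709 camera OETF and the sRGB encoding), next to a plain 1/1.95 power for comparison. These are the standard published curves; whether a given OCIO config implements them exactly this way is an assumption.

```python
def rec709_oetf(lin):
    """ITU-R BT.709 camera transfer function (scene-linear -> 0-1 signal)."""
    return 4.5 * lin if lin < 0.018 else 1.099 * lin ** 0.45 - 0.099


def srgb_encode(lin):
    """IEC 61966-2-1 sRGB encoding (linear -> 0-1 signal)."""
    return 12.92 * lin if lin <= 0.0031308 else 1.055 * lin ** (1 / 2.4) - 0.055


# Columns: linear value, Rec.709 camera, sRGB, pure 1/1.95 power.
for v in (0.01, 0.18, 0.5, 1.0):
    print(v, round(rec709_oetf(v), 4), round(srgb_encode(v), 4), round(v ** (1 / 1.95), 4))
```

At mid-grey the Rec.709 curve (about 0.409) sits close to the 1/1.95 power (about 0.415), while sRGB encodes the same value noticeably brighter (about 0.461).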